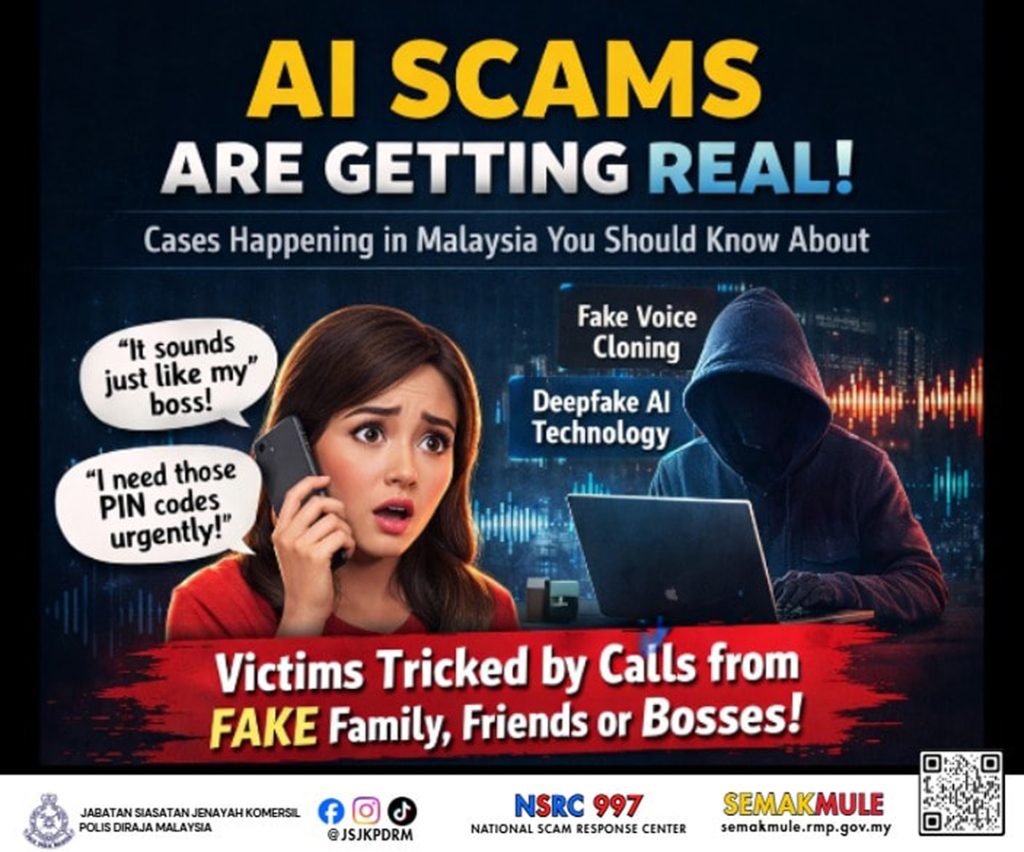

BEWARE OF AI SCAMS

AI scams are becoming more realistic. Criminals now use artificial intelligence (AI) to mimic the voices of bosses, family members, or close friends and trick victims into making immediate money transfers. Don’t get caught out.

Common modus operandi:

- AI voice cloning – voices are copied to ask for money or PINs

- Fake emergency calls – claims of accidents or urgent problems

- Immediate payment demands – pressure that leaves victims no time to think

- Deepfake audio/video – voices and faces are fabricated to look like real people

What you should do:

- Stay alert and be cautious.

- Verify identity: don’t trust a voice alone.

- Don’t share personal information: never disclose banking details to anyone over the phone.

- Take time to check: don’t rush into making payments.

Adopt the 3S approach to avoid being scammed:

1. Spot – Ask questions to verify the caller’s identity. If they ask for sensitive information (e.g., account numbers, PINs, or OTPs), it’s likely a scam.

2. Stop – Do not share personal information. If the call feels suspicious, hang up immediately.

3. Share – Spread awareness by sharing this information with family and friends. Let’s help each other recognise scam tactics.

If you become a victim:

- Contact your bank’s hotline to try to stop suspicious transactions.

- Report the incident to the National Scam Response Centre (NSRC) at 997.

- File a police report for further action.

Remember: if it sounds unusual or urgent, Spot, Stop, Share. Protect yourself and your family from cybercriminals.

Source: Amaran Scam